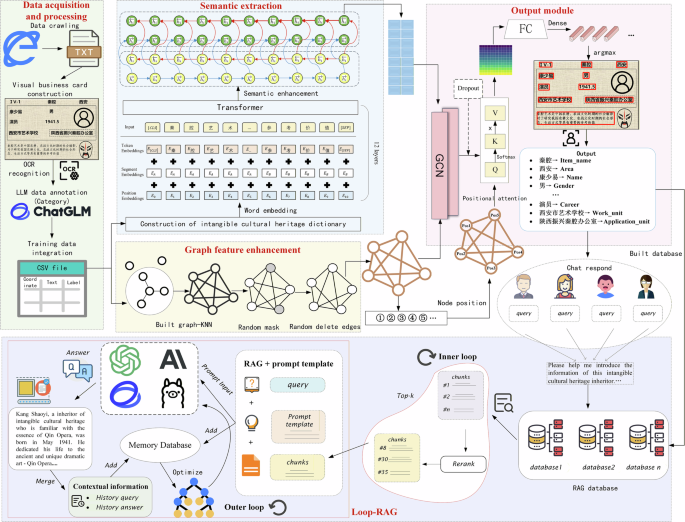

Visual information identification and Q&A of intangible cultural heritage inheritors by using enhanced Graph-Retrieval framework

Experimental environment and evaluation metric

The experiments in this study were conducted on a high-performance computing platform. The hardware environment included an Intel processor (2.40 GHz, 16 cores) and two NVIDIA GeForce RTX 4090 GPUs with 24 GB of video memory each, supporting large-scale parallel computing and deep model training. The software environment consisted of Windows 11, Python 3.9 as the primary programming language, and PyTorch 1.13.1 as the deep learning framework, with GPU acceleration enabled via CUDA 11.7.

The cross-entropy loss is selected as the loss function to measure the difference between the true label and the probability distribution predicted by the model. When there is a significant difference between the prediction results of the model and the true labels, the cross-entropy loss will assign higher loss values to these errors, thereby effectively guiding the model to better approach the values of the true labels. The specific calculation process is shown in formula (21).

$${\text{Cross}}\,{\text{entropy}}=\frac{1}{N}\mathop{\sum }\limits_{i}{L}_{i}=-\frac{1}{N}\mathop{\sum }\limits_{i}\mathop{\sum }\limits_{c=1}^{M}{y}_{ic}\,{\text{ln}}({p}_{ic})$$

(21)

Where \(N\) is the number of samples, \(M\) is the number of classification categories, and \({y}_{{ic}}\) is an indicator function: \({y}_{{ic}}=1\) if the true category of sample \(i\) is \(c\), and \({y}_{{ic}}=0\) otherwise. In a binary classification task, for example, the labels are 0 or 1 and \(M=2\). \({p}_{{ic}}\) is the predicted probability that sample \(i\) belongs to category \(c\).
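As a minimal sketch of formula (21), the loss can be computed directly from the predicted probabilities. The function name, the toy labels, and the clipping constant `eps` are illustrative assumptions, not part of the study's implementation.

```python
import math

def cross_entropy(y_true, probs, eps=1e-12):
    """Mean cross-entropy: -(1/N) * sum_i sum_c y_ic * ln(p_ic)."""
    total = 0.0
    for label, p in zip(y_true, probs):
        # y_ic is 1 only for the true class, so only that term survives.
        # eps (an assumption) guards against ln(0).
        total -= math.log(max(p[label], eps))
    return total / len(y_true)

# Toy binary example: three samples, two classes (made-up values).
labels = [0, 1, 1]
predicted = [[0.9, 0.1], [0.2, 0.8], [0.6, 0.4]]
loss = cross_entropy(labels, predicted)
```

The third sample, where the model assigns only 0.4 to the true class, contributes the largest term, which is the behavior the paragraph above describes.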

In addition, the confusion matrix, a standard tool for evaluating classification models, consists of four categories: true positives (TP), false positives (FP), false negatives (FN), and true negatives (TN). Based on the confusion matrix, three basic evaluation indices, namely precision (pre), recall, and the F1 value, are calculated as follows.

$$pre=\frac{TP}{TP+FP}$$

(22)

$$recall=\frac{TP}{TP+FN}$$

(23)

$${F}_{1}=\frac{2\times pre\times recall}{pre+recall}$$

(24)
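Formulas (22) to (24) can be sketched as a small helper. The counts below are made-up values for illustration, and returning 0.0 for an empty denominator is an assumption rather than something the study specifies.

```python
def precision_recall_f1(tp, fp, fn):
    """Precision, recall, and F1 from confusion-matrix counts."""
    pre = tp / (tp + fp) if (tp + fp) else 0.0
    rec = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * pre * rec / (pre + rec) if (pre + rec) else 0.0
    return pre, rec, f1

# Hypothetical counts for one entity category.
pre, rec, f1 = precision_recall_f1(tp=90, fp=10, fn=30)
```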

Considering the imbalance in the number of different entity categories in the experimental dataset, macro-average and weighted-average indicators are added [48]. The macro average gives equal weight to every category, so small categories count as much as large ones. The weighted average weights each category's score by its sample size, so the overall result reflects the actual category distribution.
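The difference between the two averages is easiest to see on a deliberately imbalanced toy case. The per-category F1 values and sample counts below are hypothetical and are not taken from the study's tables.

```python
def macro_and_weighted(per_class_scores, support):
    """Return (macro average, support-weighted average) of per-class scores."""
    macro = sum(per_class_scores) / len(per_class_scores)
    weighted = sum(s * n for s, n in zip(per_class_scores, support)) / sum(support)
    return macro, weighted

# Hypothetical: a large, easy category and a small, hard one.
macro_f1, weighted_f1 = macro_and_weighted([0.98, 0.89], [900, 100])
```

Here the macro average (0.935) is pulled down by the small category, while the weighted average (0.971) stays close to the score of the large one.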

For text generation evaluation, we adopt the commonly used metrics ROUGE-L, BLEU, and METEOR. ROUGE-L is recall-oriented and is based on the longest common subsequence between the generated and reference texts, whereas BLEU emphasizes n-gram precision. These metrics are suited to the report generation task, which emphasizes the completeness and linguistic quality of the generated text. In contrast, the Q&A task aims to assess whether the model provides correct answers to domain-specific ICH questions rather than text similarity. Therefore, precision, recall, and F1 are more appropriate, as they directly measure factual accuracy and answer correctness.
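For reference, ROUGE-L is commonly computed from the longest common subsequence (LCS) of the reference and generated token sequences. The sketch below assumes whitespace tokenization and a single reference; the evaluation toolkit actually used in the study is not stated, so this is illustrative only.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    dp = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i, x in enumerate(a, 1):
        for j, y in enumerate(b, 1):
            dp[i][j] = dp[i - 1][j - 1] + 1 if x == y else max(dp[i - 1][j], dp[i][j - 1])
    return dp[-1][-1]

def rouge_l(reference, candidate, beta=1.0):
    """Return (precision, recall, F) based on the LCS of whitespace tokens."""
    ref, cand = reference.split(), candidate.split()
    lcs = lcs_length(ref, cand)
    if lcs == 0:
        return 0.0, 0.0, 0.0
    precision, recall = lcs / len(cand), lcs / len(ref)
    f = (1 + beta ** 2) * precision * recall / (recall + beta ** 2 * precision)
    return precision, recall, f

p, r, f = rouge_l("the cat sat on the mat", "the cat lay on the mat")
```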

After extracting specific information from the visual business cards of ICH inheritors, the RAG strategy is applied to downstream tasks such as ICH report generation and intelligent Q&A, enabling richer and more personalized dissemination of ICH. The specific model configurations are presented in Table 3.

Training of the visual information extraction model

This section focuses on training and validating the Graph-Retrieval model for visual information extraction. The preprocessed ICH inheritor business card data is divided into training, validation, and testing sets in a 6:2:2 ratio. The training set ensures sufficient data for feature learning, the validation set is used to tune hyperparameters and optimize recognition performance, and the test set independently evaluates the model’s generalization ability.
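A 6:2:2 split can be sketched as follows. The total of 5237 cards is the figure reported later in the paper; treating it as the size of the split dataset, the shuffling, and the fixed seed are all assumptions of this sketch.

```python
import random

def split_622(items, seed=0):
    """Shuffle and split items into train/validation/test at a 6:2:2 ratio."""
    items = list(items)
    random.Random(seed).shuffle(items)  # seed is an illustrative assumption
    n_train = round(0.6 * len(items))
    n_val = round(0.2 * len(items))
    return items[:n_train], items[n_train:n_train + n_val], items[n_train + n_val:]

train, val, test = split_622(range(5237))
```

Under these assumptions the split yields 3142 training and 1047 validation samples, matching the counts quoted in the following subsections.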

To improve model performance, this study systematically tested multiple sets of hyperparameters and optimized them using grid search. The key parameters included learning rate, batch size, number of training epochs, optimizer type, number of network layers, word embedding dimension, chunk size and overlap rate, dropout rate, mask probability, edge deletion probability, and retrieval top-k value. The specific settings are listed in Table 3. Throughout the experimental process, the model followed a step-by-step workflow of semantic extraction, graph convolution, and feature enhancement, enabling it to effectively identify key information in the visual business cards of ICH inheritors. The detailed hyperparameter configurations used in the grid search are provided in Table 4.

Through extensive grid search, the optimal combination of hyperparameters was identified to minimize the loss of the Graph-Retrieval model on the 3142 training samples. The model achieved the best convergence under the following settings: a learning rate of 0.001, batch size of 64, 100 training epochs, a 12-layer Transformer architecture for RoBERTa, a 2-layer BiLSTM network, a 2-layer GCN, word embedding dimension of 512, random node masking probability of 0.05, and random edge deletion probability of 0.05. Under these conditions, the model’s loss curve remained stable and consistently converged toward the minimum value, as shown in Fig. 5.
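The grid search procedure can be sketched generically as an exhaustive loop over the Cartesian product of candidate values. The candidate lists and the `toy_validation_loss` function are placeholders: in practice the evaluation step would train the model and return its loss, and the actual search space is the one given in Table 4.

```python
from itertools import product

def grid_search(grid, evaluate):
    """Try every combination in grid and return the one with the lowest loss."""
    names = list(grid)
    best_cfg, best_loss = None, float("inf")
    for values in product(*(grid[n] for n in names)):
        cfg = dict(zip(names, values))
        loss = evaluate(cfg)
        if loss < best_loss:
            best_cfg, best_loss = cfg, loss
    return best_cfg, best_loss

# Hypothetical candidate values, not the study's full search space.
grid = {
    "learning_rate": [0.01, 0.001, 0.0001],
    "batch_size": [32, 64],
    "mask_prob": [0.025, 0.05, 0.1],
}

def toy_validation_loss(cfg):
    # Placeholder for "train the model and measure its loss".
    return (abs(cfg["learning_rate"] - 0.001)
            + abs(cfg["batch_size"] - 64) / 1000
            + abs(cfg["mask_prob"] - 0.05))

best_cfg, best_loss = grid_search(grid, toy_validation_loss)
```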

Model training loss changes.

From the analysis of Fig. 5, it can be concluded that after 30 training epochs, the loss of the Graph-Retrieval model on the training set decreased steadily from 14.354 to 0.117, eventually stabilizing at this level. Throughout the entire training process, the model exhibited excellent stability, with a consistent decline in loss and no significant fluctuations. Notably, between the 20th and 30th epochs, the model showed clear signs of convergence, further demonstrating its robustness and efficiency during training.

Validation of the visual information extraction model

Although the Graph-Retrieval model performs well on the training set, its recognition performance must still be evaluated on the validation set, with potential adjustments to network parameters made based on the results. Accordingly, 1047 validation samples were input into the trained Graph-Retrieval model to assess its performance using precision (P), recall (R), and F1 score (F1). The recognition results are presented in the confusion matrix in Fig. 6.

The confusion matrix of model in the validation set.

As shown in Fig. 6, on the validation set, the Graph-Retrieval model achieved macro-averaged precision, recall, and F1 scores of 0.9313, 0.9304, and 0.9305, respectively. Overall, the model demonstrated strong recognition performance, with an average prediction accuracy exceeding 0.91. In particular, the highest accuracies were observed for category 4 (Gender) and category 6 (Birthday), both reaching 98%. This can be attributed to the relatively simple and short semantic content of gender information in category 4, and the distinct numeric patterns characterizing birthdate information in category 6. Consequently, the model achieves optimal predictive performance for these two categories. By contrast, accuracy was lower for category 3 (Name) and category 8 (Application_unit), with correct predictions of 93% and 89%, respectively. Category 3 involves personal names, which vary widely among ICH inheritors and thus increase prediction difficulty. Category 8 represents application unit information, which overlaps considerably with work unit information in category 7, leading to similar textual features and a reduction in prediction accuracy.

Testing of the visual information extraction model

To thoroughly assess the Graph-Retrieval model’s performance in recognizing ICH inheritors’ business card information on the test set, comparative experiments were conducted with several benchmark models. The results of these comparisons are summarized in Table 5.

BERTgrid [22]: Based on the grid method, the character encoding in Chargrid is replaced with word-level BERT encoding, and information recognition is performed using a large-scale pre-trained language model.

VisualWordGrid [24]: Introduces visual information, overlays document images with grids, and extracts text features.

BROS [30]: Combines the BERT model with a relative position encoding embedded in the self-attention mechanism to explore the deep connections between the semantic and layout modalities.

GC-few shot [31]: A pre-trained model based on RoBERTa and graph networks extracts semantic and layout information, while also incorporating a font encoding module.

Improved-GNN [35]: Visual image information is added during the initialization of document graph nodes, and the text embedding is combined with the corresponding visual image embedding to enhance the model’s representation ability.

Multimodal weighted graph [37]: Abstracts the document into a fully connected graph, embeds the semantic and layout information of each text segment into the graph structure, and integrates node information through an attention mechanism.

DocExtractNet [49]: A modular framework built on LayoutLMv3 that leverages the features of the image and text modalities to extract key information from receipts.

Donut [50]: Adopts an end-to-end Transformer-based architecture that analyzes document images directly, making it more accurate in handling different languages and formats.

Graph-Retrieval: The visual information recognition model proposed in this study combines semantic information with enhanced graph features for accurate prediction.

The experimental results in Table 5 demonstrate that the Graph-Retrieval model proposed in this study significantly outperforms the benchmark models in recognizing visual information on ICH inheritors’ business cards, achieving a macro-average F1 score of 0.928. In comparison, the BERTgrid model performed poorly on the “Name,” “Work unit,” and “Application unit” categories, with average accuracy below 0.8. The VisualWordGrid method improves feature extraction by integrating grid layout and visual information, achieving a macro-average F1 score of 0.823, a 1.35% increase over BERTgrid. The BROS method enhances semantic recognition by incorporating position encoding embeddings into a pre-trained model. Although its macro-average F1 score reaches 0.826, it still does not fully capture the spatial layout relationships among node features, which limits its overall recognition performance.

Secondly, GC-few shot introduces a search-word strategy and pre-training, resulting in a macro-average F1 score of 0.851, which is 3.03% higher than that of the BROS method. Improved-GNN further enhances performance by incorporating visual image information, combining text embeddings with the corresponding visual embeddings of each region. This approach not only accounts for spatial layout but also strengthens the model’s feature representation capability, achieving a macro-average F1 score of 0.858. Donut, an end-to-end visual document model, reaches a macro-average F1 score of 0.873, indicating that a pure vision Transformer architecture has strong modeling ability in image-level semantic alignment. However, Donut relies only on visual encoders, and its ability to model the structural relationships between local text regions is limited. The Multimodal weighted graph method introduces an attention mechanism in feature extraction to construct a weighted graph, effectively integrating semantic information with node relationship features. It further improves predictive performance, with a macro-average F1 score of 0.893, a relative increase of 2.29% over Donut and 4.08% over Improved-GNN. Furthermore, DocExtractNet makes full use of the features of the image and text modalities, reaching a macro-average F1 score of 0.907.

Finally, the Graph-Retrieval model proposed in this study considers both semantic information and graph relationships, while enhancing robustness through random node masking, random edge deletion, and positional attention mechanisms. As a result, it achieves a macro-average F1 score of 0.928, which is 2.32% higher than that of DocExtractNet. This demonstrates that the fusion of semantic information and graph features not only achieves the best recognition performance but also ensures stable results across different categories.

Ablation experiment of visual information extraction

To further evaluate the contribution of each module in the Graph-Retrieval model to the overall network performance, this study conducted ablation experiments. These experiments compared recognition performance when removing semantic information, node masking, random edge deletion, and positional attention mechanisms. Using the constructed ICH inheritor summary lexicon as the basis, each ablation model is described below. The specific results of these experiments are presented in Fig. 7.

a The ablation experiment of the graph feature enhancement module. b The ROC curve.

W/O WB-EGraph: Semantic extraction uses RoBERTa’s lexicon to obtain more accurate word embeddings, and the GCN network has not undergone any graph feature enhancement.

W/O W-EGraph: The semantic extraction uses RoBERTa’s lexicon to obtain more accurate word embeddings. The BiLSTM network learns contextual information, while the GCN network does not undergo any graph feature enhancement.

W/O EGraph: The semantic extraction uses the summary lexicon of ICH inheritors, and the semantic extraction method is the same as above; however, no graph feature enhancement is performed during graph structure learning.

W/O GCN1: The semantic extraction process is the same as above, and a random node masking is introduced in the graph feature enhancement process.

W/O GCN2: The semantic extraction process is the same as above, and the random edge deletion method is introduced in the graph feature enhancement process.

W/O GCN3: The semantic extraction process is the same as above, and the graph feature enhancement process uses a positional attention mechanism.

Graph-Retrieval: The model proposed in this study combines semantic extraction with three graph enhancement methods: random node masking, random edge deletion, and a positional attention mechanism.

As shown in Fig. 7, the F1 value of W/O WB-EGraph is the lowest in the ablation experiment. Using W/O WB-EGraph as the benchmark, subsequent module additions progressively improved recognition performance, with the Graph-Retrieval model ultimately achieving the best predictive results. Specifically, W/O W-EGraph incorporates the BiLSTM network to enhance contextual semantic learning based on the pre-trained RoBERTa model, achieving an F1 value of 0.8566. Next, by introducing the proprietary vocabulary of ICH inheritors, W/O EGraph strengthened the training on specialized terminology and proper nouns, reaching an F1 value of 0.8767, a 2.35% improvement over W/O W-EGraph.

Furthermore, to capture spatial relationships in the visual business card data, graph feature methods were incorporated. With the addition of random node masking, the W/O GCN1 model showed a significant improvement, achieving an F1 value of 0.8915, which is 1.68% higher than that of W/O EGraph. This demonstrates that random node masking helps the model learn from noisy features, enhancing its robustness to data imperfections such as wear and tear. The W/O GCN2 model employs random edge deletion, yielding an F1 value of 0.9061. Although its improvement is smaller than W/O GCN1, it enables the model to learn diverse graph structures and further strengthens robustness. By incorporating the sequential order of nodes, W/O GCN3 achieved the greatest performance gain, with an F1 value of 0.9167, 4.56% higher than W/O EGraph. Ultimately, the Graph-Retrieval model achieved optimal predictive performance by integrating semantic features with multiple graph feature enhancement strategies. Its ROC curve remained the highest, with an F1 value of 0.9299, representing an 11.6% improvement over the benchmark model W/O WB-EGraph.
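The two stochastic augmentations can be sketched in plain Python. Zeroing the whole feature vector of a masked node and dropping each edge independently are assumptions of this sketch; the paper does not specify how masked nodes are represented, and the toy graph is made up.

```python
import random

def mask_nodes(features, p, rng):
    """Replace each node's feature vector with zeros with probability p."""
    return [[0.0] * len(f) if rng.random() < p else list(f) for f in features]

def drop_edges(edges, p, rng):
    """Delete each edge independently with probability p."""
    return [e for e in edges if rng.random() >= p]

rng = random.Random(0)  # fixed seed only for reproducibility of the sketch
features = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
edges = [(0, 1), (1, 2), (0, 2)]

# Probabilities of 0.05 follow the reported best setting.
aug_features = mask_nodes(features, 0.05, rng)
aug_edges = drop_edges(edges, 0.05, rng)
```

Applying fresh random masks and deletions at every training step exposes the model to slightly different graphs, which is the robustness argument made above.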

The influence of model parameters on visual information extraction experiments

This section investigates the impact of various parameters in the graph feature enhancement module on the information recognition performance of the Graph-Retrieval model. Specifically, random node masking, random edge deletion, and the number of GCN layers are examined as the primary factors. Since the positional attention mechanism is automatically learned during training rather than manually configured, it is excluded from this parameter analysis. The parameter settings and corresponding experimental results are presented in Fig. 8.

Experimental results of parameter influence.

According to Fig. 8, when the random node masking probability is set to 0.05, the model achieves an F1 value of 0.9302 on the test set, demonstrating high recognition performance. In contrast, with a masking probability of 0.025, the F1 value drops slightly to 0.9287. This indicates that an appropriate masking probability enhances the model’s generalization ability, allowing it to maintain high predictive performance even with incomplete or damaged data. However, as the masking probability increases, node features are increasingly disrupted, resulting in incomplete feature learning; for example, at a masking probability of 0.3, the F1 value falls sharply to 0.8624.

Similarly, random edge deletion probability significantly affects model performance. When the edge deletion probability exceeds 0.05, predictive performance declines markedly. Specifically, at a probability of 0.07, the F1 value drops to 0.8838, a 1.95% decrease compared to 0.05. Within the range of 0.01 to 0.05, the F1 value remains relatively stable around 0.91, reaching 0.9299 at a probability of 0.02. This suggests that moderate edge deletion prevents the model from overfitting to a single graph structure, while excessive deletion damages the graph structure and impairs recognition performance.

Finally, the number of GCN layers significantly affects model performance. With two layers, the model achieves its best performance, with an F1 value of 0.9304. With only one layer, recognition performance is limited, yielding an F1 value of 0.9169. Beyond two layers, model performance begins to decline; at four layers, the F1 value drops to 0.9114. This suggests that a two-layer GCN effectively captures node features and integrates higher-level abstract information, enhancing the model’s understanding of graph structure. In contrast, a single-layer GCN has limited capacity to learn complex relationships, while deeper networks may suffer from over-smoothing, where node representations become overly similar, potentially causing information loss.
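The over-smoothing effect can be illustrated with the aggregation step alone. The sketch below repeatedly averages each node with its neighbors on a made-up four-node path graph; it deliberately omits the learned weights and nonlinearities of a real GCN layer, so it shows only the tendency, not the model's actual behavior.

```python
def mean_aggregate(values, neighbors):
    """One propagation step: each node averages itself and its neighbors."""
    out = []
    for i, v in enumerate(values):
        group = [v] + [values[j] for j in neighbors[i]]
        out.append(sum(group) / len(group))
    return out

def spread_per_layer(values, neighbors, layers):
    """Track max - min of the node values after each propagation step."""
    spreads = []
    for _ in range(layers):
        values = mean_aggregate(values, neighbors)
        spreads.append(max(values) - min(values))
    return spreads

# Hypothetical path graph 0-1-2-3 with scalar node features.
neighbors = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
spreads = spread_per_layer([0.0, 1.0, 2.0, 9.0], neighbors, layers=4)
```

The spread between node values shrinks with every step, so after enough layers the nodes become hard to tell apart.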

Comparative experiments on public datasets of visual information extraction

To evaluate the generality and scalability of our method, we selected five publicly available datasets (SROIE, FUNSD, CORD, RVL-CDIP, and Business_Cards_Images) and compared our approach with four representative visual information extraction methods: BROS, GC-few shot, Multimodal Weighted Graph, and DocExtractNet. We also examined how the value of k in the k-nearest neighbor method, which is used to construct the graph matrix, influences extraction performance. The F1 value is used as the evaluation index, and the results are shown in Table 6.

From the overall experimental results, Graph-Retrieval achieves the best performance on all five cross-domain visual information extraction datasets, demonstrating significant generalization ability and cross-scenario robustness. In the SROIE dataset, Graph-Retrieval achieved the highest F1 value of 0.919 under the k = 4 setting, significantly outperforming all benchmark methods. In the more structurally complex form dataset FUNSD, its F1 value reached 0.908, and the improvement rates compared with Multimodal Weighted Graph and DocExtractNet reached 9.8% and 8.6% respectively. This indicates that Graph-Retrieval also has certain feature modeling capabilities when dealing with semi-structured layouts and cross-text block relationships. In the complex ticket CORD dataset, the F1 value of Graph-Retrieval was further increased to 0.931. In the RVL-CDIP dataset, the performance of Graph-Retrieval reached 0.925 and achieved an improvement of over 10% on multiple benchmark models, demonstrating its robustness in a multi-layout document environment with significant layout differences. In the Business Cards Images dataset, the F1 value of Graph-Retrieval reaches 0.924. Overall, Graph-Retrieval demonstrates stable and consistent advantages in various structures, visual layouts, and cross-domain document scenarios.

In terms of graph construction, the value of k has a significant impact on model performance, and k = 4 is consistently the best configuration. When k = 2, each node is connected to only two neighbors, so the graph is overly sparse, information propagation is limited, and the overall performance of every model is relatively low. As k increases to 3, the connectivity of the graph is enhanced and performance generally improves; on the CORD dataset, for instance, the F1 value rises from 0.903 to 0.921, but it remains slightly below the optimum. When k = 4, the graph structure achieves the best balance between connectivity and noise control, and all models reach their highest performance. In particular, Graph-Retrieval achieves peak performance on all datasets, such as 0.908, 0.931, and 0.925 on FUNSD, CORD, and RVL-CDIP, respectively. When k increases to 5, the additional edges introduce some noisy connections, resulting in a slight decline in performance; on the Business Cards dataset, for example, the F1 value drops from 0.924 to 0.920. Overall, the experimental results verify that an appropriate neighborhood size is crucial for the quality of the graph structure: k = 4 balances the sparsity and expressive power of the graph, thereby maximizing the performance of the visual information extraction model.
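A k-nearest-neighbor graph over text regions can be sketched as follows. Using the Euclidean distance between text-box centers and treating edges as undirected are assumptions of this sketch; the paper states only that a k-nearest neighbor method is used, and the coordinates are made up.

```python
import math

def knn_edges(centers, k):
    """Connect every node to its k nearest neighbors (undirected edges)."""
    edges = set()
    for i, (xi, yi) in enumerate(centers):
        dists = sorted(
            (math.hypot(xi - xj, yi - yj), j)
            for j, (xj, yj) in enumerate(centers)
            if j != i
        )
        for _, j in dists[:k]:
            edges.add((min(i, j), max(i, j)))
    return sorted(edges)

# Hypothetical centers of five text boxes on a card.
centers = [(0, 0), (1, 0), (2, 0), (3, 0), (10, 0)]
sparse = knn_edges(centers, 1)
dense = knn_edges(centers, 4)
```

With a small k the graph is a sparse chain, while k = 4 on five nodes already yields a complete graph, which illustrates the sparsity versus noise trade-off discussed above.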

Generation of ICH report

The above experiments verified the performance of the Graph-Retrieval model in visual information extraction. Based on the extraction results, this section uses LLMs to perform report generation and intelligent question answering tasks. Meanwhile, based on the powerful semantic capabilities of LLMs, the Loop-RAG enhancement strategy is adopted to introduce high-quality external knowledge. Therefore, different input templates have been set for each task. In this study, the test text is concatenated with Loop-RAG result as the input content, and the output results are obtained through multiple LLMs. Finally, the performance of the model is evaluated through the quantitative assessment of the output results by evaluation indicators. It is specifically shown in Fig. 9.

Where Prompt is the prompt word, Demonstration is the prompt example, Input is the input question, and the contextual information is the historical information in multiple rounds of Q&A. Ultimately, the LLMs generate more accurate and effective answers by combining the above content for retrieval.
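One way to picture how these components are combined is a simple template function. The section labels, their order, and all strings below are hypothetical placeholders; the actual templates are the ones shown in Fig. 9.

```python
def build_prompt(instruction, demonstrations, history, retrieved, question):
    """Concatenate prompt, demonstrations, context, retrieved text, and input."""
    parts = [instruction]
    for q, a in demonstrations:
        parts.append(f"Example question: {q}\nExample answer: {a}")
    for q, a in history:
        parts.append(f"Previous question: {q}\nPrevious answer: {a}")
    if retrieved:
        parts.append("Retrieved knowledge:\n" + "\n".join(f"- {r}" for r in retrieved))
    parts.append(f"Question: {question}")
    return "\n\n".join(parts)

# All strings are placeholders for illustration.
prompt = build_prompt(
    instruction="Answer questions about ICH inheritors using the knowledge provided.",
    demonstrations=[("<example question>", "<example answer>")],
    history=[],
    retrieved=["<passage returned by Loop-RAG>"],
    question="<user question>",
)
```

Passing more pairs in `demonstrations` corresponds to increasing the number of prompt examples, and `history` carries the earlier turns of a multi-round conversation.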

LLMs can generate cultural background stories about ICH inheritors based on key information from visual business cards. For instance, they can integrate details such as inheritors’ skills, cities, and affiliated units to produce coherent cultural introductions suitable for promotional materials or exhibition displays. For this purpose, this study adopted Llama3, ChatGLM-4, GPT-4, and multiple benchmark models based on RAG. A total of 5237 ICH inheritors’ business cards were tested, with each model generating corresponding summary reports, including introductions of the inheritors, descriptions of their skills, and the status of the respective ICH.

For automated report generation tasks, performance was evaluated using BLEU, METEOR, and ROUGE-L metrics. It also includes the assessment of generation time, text length, and the number of inner and outer loop size. The report generation results of the benchmark models are presented in Table 7.

According to the results in Table 7, the base models differ significantly in the task of generating ICH reports. GPT-4 achieved the highest scores on all three evaluation metrics, with BLEU, METEOR, and ROUGE-L of 26.40%, 26.90%, and 27.50%, respectively, demonstrating the best semantic expression and text organization ability. ChatGLM-4 came second: although its BLEU was 3.2% lower than that of GPT-4, it was still significantly better than Llama-3. The overall indicators of Llama-3 are relatively low, especially ROUGE-L at only 23.80%, indicating remaining deficiencies in content coverage and factual consistency.

On this basis, this study further introduces two types of retrieval enhancement strategies, namely the common RAG and Loop-RAG, to evaluate the impact of external knowledge injection on the generation quality. Ordinary RAG can already bring about certain performance improvements. For instance, the BLEU, METEOR, and ROUGE-L of RAG-Llama3, RAG-ChatGLM4, and RAG-GPT4 have all been enhanced compared to their corresponding base models. Among them, the improvement of RAG-GPT4 is the most stable. Its ROUGE-L has increased from 27.50% to 27.53%. However, such improvements mainly come from one-time retrieval enhancements, and the overall extent is limited.

In contrast, Loop-RAG further introduces a dynamic optimization mechanism with inner and outer loops, making the gain in generation quality more significant. Loop-RAG-GPT4 achieved the highest performance in this study, with its BLEU, METEOR, and ROUGE-L reaching 27.10%, 27.60%, and 28.30%, respectively, verifying the effectiveness of multi-round retrieval decisions and cross-task strategy optimization in factual reinforcement and contextual consistency. The enhancement for weaker models is more obvious. For example, the BLEU of Loop-RAG-Llama3 increased from 21.50% for the base model to 24.80%, a gain of 3.3 percentage points, indicating that Loop-RAG is particularly suitable for compensating for the insufficient knowledge coverage of the base model. Meanwhile, the performance improvement of Loop-RAG comes at a computational cost: both generation time and text length increase significantly. For example, the generation time of Loop-RAG-Llama3 rises from 15.3 s to 22.5 s, and the text length is approximately twice that of the base model. Although Loop-RAG-GPT4 performs 4 policy updates in the outer loop and triggers an average of 4 inner-loop searches, its generation time is 21.4 s, achieving a better balance between quality and efficiency.

Finally, the generated report for “Qinqiang Opera (秦腔)” is shown in Fig. 10. The left side displays the selected Loop-RAG knowledge base and the user requirements for report writing. The upper right corner illustrates the search process within the Loop-RAG database for each query. The report generation relies on the high-quality knowledge retrieved from this process. The bottom right corner presents the final generated report content. Overall, by leveraging the Loop-RAG strategy, the generated Q&A content is more diverse, and the answers are more accurate.

This is the report generation page. On the left is the constructed knowledge base, in the upper right corner is the knowledge retrieval process, and in the lower right corner is the generated result.

Intelligent Q&A on ICH

This study tested Llama3, ChatGLM-4, GPT-4, RAG-based models, and Loop-RAG-enhanced models for question answering. During the experiment, questions about ICH inheritors were input into each model, and the accuracy of their responses was evaluated. Each model was tasked with answering 2000 common questions about ICH information, with a response considered correct if it matched the real information. Detailed accuracy results and sample Q&A content are presented in Table 8 and Fig. 11. The retrieval steps metric counts the complete passes through the retrieval chain, that is, the number of times the model performs retrieval, filtering, and recombination; it reflects how efficiently the model locates answers in the external knowledge base.

According to the data in Table 8, the overall question-answering performance of the benchmark models Llama3, ChatGLM4, and GPT-4 is relatively stable, with F1 values of 0.862, 0.844, and 0.879, respectively. However, because these models did not cover the latest ICH knowledge during pre-training, they are prone to factual errors when handling questions about specific inheritors, technical details, or regional cultural practices; that is, they tend to give hallucinated answers. To alleviate these problems, this study constructed a structured local knowledge base of ICH inheritors and introduced two types of retrieval enhancement mechanisms on this basis: the common RAG and Loop-RAG, which further incorporates a loop optimization strategy. With the common RAG, the F1 values of all models improved over the corresponding benchmark models: RAG-Llama3, RAG-ChatGLM4, and RAG-GPT4 reached 0.875, 0.863, and 0.889, respectively. This indicates that external knowledge retrieval can effectively fill the domain knowledge blind spots of the models.

On this basis, the experimental results show that the Loop-RAG strategy achieves significant improvements in precision, recall, and F1. For example, the F1 value of Loop-RAG-Llama3 reaches 0.924, which is 7.19% higher than that of the benchmark model. The F1 value of Loop-RAG-ChatGLM4 rises to 0.908, an increase of 7.58%. The best model is Loop-RAG-GPT4, whose F1 reaches 0.941, which is 7.05% higher than the original GPT-4 model. Furthermore, the optimization process indicators in the table verify the convergence behavior and knowledge fusion efficiency of Loop-RAG: although Loop-RAG-GPT4 goes through 2 inner loops and 4 outer loops, its overall retrieval path is the shortest and it converges in 2 rounds, indicating that it can reach the best result at a lower optimization cost. In contrast, Loop-RAG-ChatGLM4 requires six retrieval steps and a longer convergence process, reflecting differences in knowledge absorption efficiency among the models. Overall, the Loop-RAG strategy significantly improves the accuracy of the models in answering ICH questions, and its advantages are most obvious for complex questions in ICH scenarios.
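The percentages quoted above are relative gains over the corresponding base model rather than absolute differences in F1. A short check using only the F1 values reported in this section (the function name is illustrative):

```python
def relative_gain(new, base):
    """Relative improvement of new over base, in percent."""
    return (new - base) / base * 100

# (base F1, Loop-RAG F1) as reported in the text.
reported = {
    "Llama3": (0.862, 0.924),
    "ChatGLM4": (0.844, 0.908),
    "GPT-4": (0.879, 0.941),
}
gains = {name: round(relative_gain(new, base), 2) for name, (base, new) in reported.items()}
```

The absolute differences are about 0.06 in each case, which correspond to relative gains of roughly 7%.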

The question-answering case shown in Fig. 11 further demonstrates that, with the support of hybrid retrieval and loop optimization, Loop-RAG can generate more complete answers that are closely tied to the ICH knowledge system, providing a high-quality solution for knowledge question answering in specific fields.

Few-shot test

Additionally, due to the limited information about ICH inheritors stored in the local knowledge base, LLMs often exhibit lower accuracy when answering unfamiliar questions. To evaluate the few-shot learning capability of the Graph-Retrieval model, 0-shot, 1-shot, 2-shot, and 3-shot experiments were conducted. The experimental setup involved providing the same type of prompt examples before inputting queries into the LLMs, enabling the model to reason effectively with fewer samples and handle previously unseen data. Following these steps, the performance of different benchmark models was tested under few-shot conditions. The results demonstrate that incorporating appropriate prompt examples can significantly improve question answering accuracy. The detailed experimental outcomes are presented in Fig. 12.

As shown in Fig. 12, increasing the number of prompt examples leads to improved performance. Compared with the 0-shot condition, under 3-shot conditions, the F1 values of Llama3, ChatGLM4, GPT-4, Loop-RAG-Llama3, Loop-RAG-ChatGLM4, and Loop-RAG-GPT4 increased by 6.21%, 5.48%, 5.65%, 5.17%, 4.94%, and 3.92%, respectively. Notably, the Loop-RAG-GPT4 model achieved an F1 value of 0.954 under the 3-shot condition, significantly outperforming all other benchmark models. This indicates that Loop-RAG can also make full use of the retrieval process in the few-shot setting and improve the accuracy of the answers.
